State of the World

I’m a solutions architect at Databricks. Every day I need to catch up on Databricks releases, blog posts from Databricks and other key AI industry players, AI industry media coverage, Hacker News, tweets about AI, and a growing list of other sources. That’s a lot, and each morning I had to decide if I was actually going to spend 30–45 minutes just finding the news or cross my fingers that nothing significant happened and go on with my day.

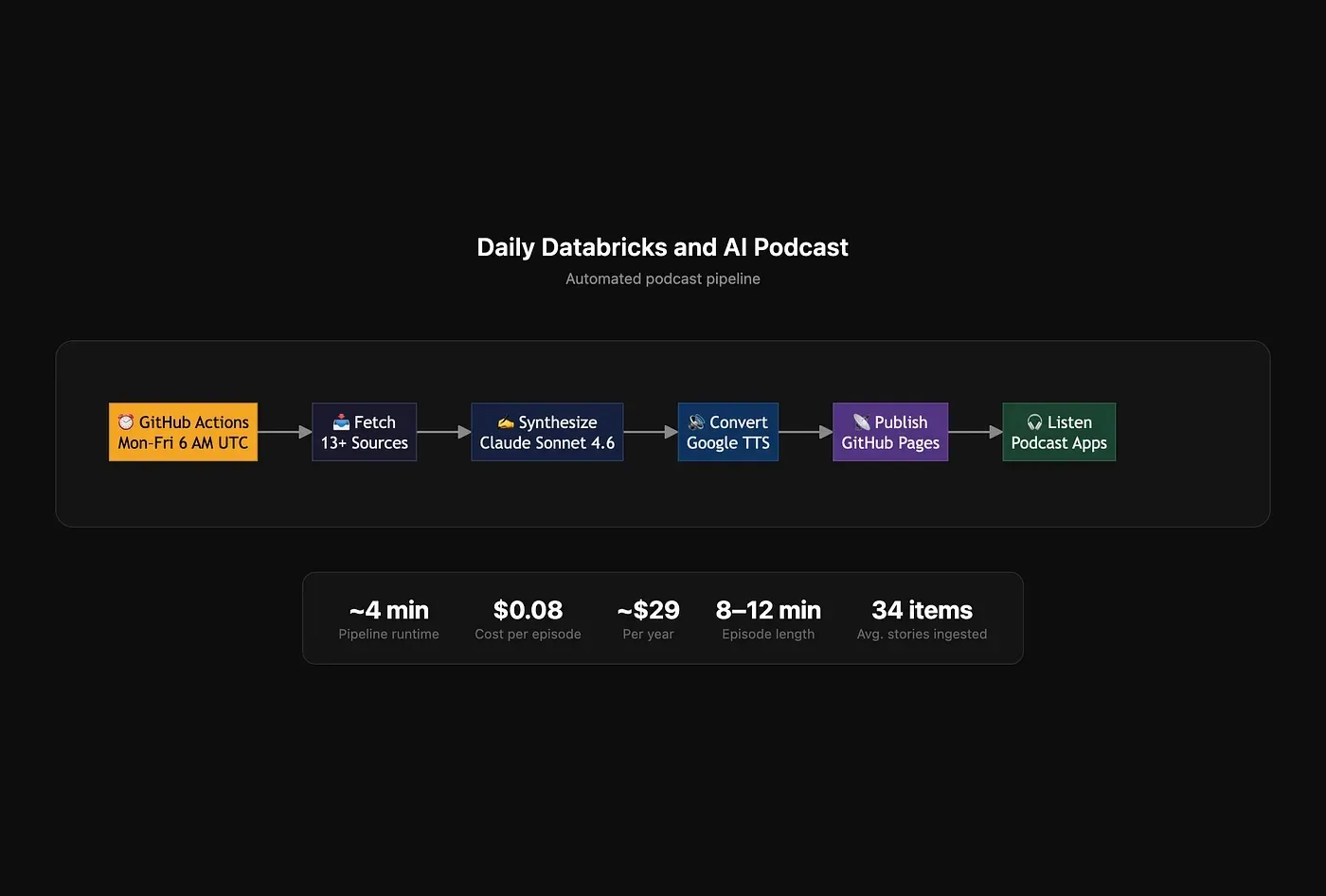

I wanted a personalized 8–12 minute podcast, automatically generated and delivered to my phone every morning.

Since I’m pretty mediocre at coding but pretty good at asking for what I want, I went to Claude Code. Three hours later, it was live on Spotify.

The Approach

Traditional coding: Plan → Write → Debug → Ship (eventually)

Vibe coding: Describe → Run → Fix → Ship (fast)

Claude Code reads errors, debugs issues, and fixes problems in real-time. Instead of writing code, you’re having a conversation about what you want the outputs.

The Build: From Zero to Podcast

Starting Point (10 Minutes)

I wrote a spec file (with help from Claude) laying out what I wanted:

- Scrape 13+ sources

- Send to Claude API for script generation

- Convert to audio with Google TTS

- Publish to GitHub Pages as RSS feed

- Run daily via GitHub Actions

I took that spec, asked Claude Code to implement it, and focused on validating outputs.

Claude created the entire project structure in 10 minutes (you can check out the repo here).

The Error Gauntlet (90 Minutes)

Since this project combined code in my IDE and GitHub with a number of services I had to use through their UIs, it surfaced a lot of failures. Here’s every error I hit, consolidated into themes.

Theme 1: APIs Are Landmines Until You Read the Fine Print

Half the errors in this project came from assuming things about APIs that weren’t true.

Claude Pro ≠ API credits. The very first run failed because a $20/month subscription doesn’t include API access — that’s a separate billing relationship requiring prepaid credits. Twitter’s “free” tier doesn’t let you read timelines. OpenAI’s blog actively blocks scrapers with 403s. Google TTS has a hard 5,000 byte limit per request.

The pattern: every external service has constraints that aren’t obvious until you hit them. The fix is always: read the billing docs before you start (or have Claude Code summarize them for you), and build graceful degradation so one broken source doesn’t sink the pipeline.

Theme 2: The Web Is Actively Hostile to Scrapers

Generic CSS selectors like article, .post, and h2 returned zero results on almost every site. Databricks uses div[data-cy="CtaImageBlock"]. Anthropic uses CSS module classes with generated hashes like __KxYrHG__. OpenAI just returns 403 outright.

The lesson is to build redundancy so that when individual sources fail (and they will), the pipeline continues. Eleven working sources is good enough.

Theme 3: Metadata Bites You at the Finish Line

Three separate errors came from metadata problems that only surfaced during Spotify validation: missing cover art, a missing email address, and artwork hosted in the wrong GitHub branch. These were invisible problems until the very end.

The RSS spec is also a trap. Podcast RSS isn’t just RSS 2.0 — it’s RSS 2.0 plus iTunes extensions. Spotify and Apple Podcasts validate different things. You need <itunes:email> at the channel level and nested inside <itunes:owner> to satisfy both. The spec you read and the validator you face aren’t always aligned.

Theme 4: Math and Encoding Assumptions Will Humiliate You

Two errors came from bad assumptions about how numbers work: episode duration showed 2 minutes instead of 8 because the formula didn’t account for MP3 bitrate math (fileSize × 8 ÷ 128,000), and TTS failed because byte length ≠ character count when you’re dealing with UTF-8.

Theme 5: State Doesn’t Update Just Because Code Does

The subtlest error in the whole project: after adding email tags to the publisher code, the live RSS feed still had the old structure. The publisher only inserted new episodes into existing feeds, but never touched channel metadata. This wasn’t obvious until I inspected the live XML directly.

Code changes and state changes are different things. When your pipeline writes to a persistent artifact (a feed file, a database, a cache), updating the logic that writes it doesn’t retroactively fix what’s already there. Sometimes you have to delete and regenerate from scratch.

Production (5 Minutes)

GitHub Actions workflow was already written. Added API keys as secrets. First automated episode generated the next morning at 5 AM.

Total time: 3 hours.

The Vibe Coding Workflow

-

Start with a Spec (But Don’t Overthink It) — I wrote a 680-line spec with Claude by dictating what I wanted (I mean that literally — I used superwhisper). Claude Code to code. The spec gave context, Claude Code did the work.

-

Run Immediately — Don’t wait for perfect code. Run it. Copy errors. Say “fix this.” Repeat.

-

Let Claude Debug Itself — When scrapers failed, I said “fix the databricks newsroom issue.” Claude inspected pages, found selectors, rewrote code. This saved 30+ minutes of manual HTML inspection.

-

Make Decisions, Don’t Get Stuck — OpenAI 403? Just make it fail gracefully. I have 12 other sources. Ship what works.

-

Ship, Then Iterate — After everything worked end-to-end, I could have added deduplication, transcripts, week-in-review mode. I want to do that, but haven’t yet. I’ll just add it later since there’s no downside to me getting this out now.

What I Learned

Claude Code is a 10x Multiplier

I wrote 0 lines of code in 3 hours. I described what I wanted. The traditional “Think → Write → Debug → Ship” loop became “Think → Describe → Ship → Iterate.”

LLMs Excel at Synthesis

The Claude-generated scripts cluster news into themes, add opinionated commentary, and sound like a real person. I expected robotic output, but this gives some editorial judgment.

Chunking is Universal

I ran into TTS byte limits, LLM token limits, and API rate limits. If you’re building with APIs, you’ll hit these. Be ready to chunk.

GitHub Actions are Underrated

Free cron jobs, built-in secrets, no servers. Perfect for side projects.

The Economics

Development time: 3 hours

Operational costs (estimates):

| Item | Annual Cost |

|---|---|

| Claude API (Sonnet 4.6) | ~$29 |

| Google TTS (Journey-D) | $0 (within free tier) |

| Everything else | $0 |

| Total | ~$29/year |

How to Build Your Own

- Write a simple spec: describe sources, format, schedule

- Pick your stack: scraper, LLM, TTS, hosting, scheduler. Claude can give you suggestions here

- Run locally first: fix issues with “fix this”

- Deploy to GitHub Actions: add cron schedule

- Subscribe: add RSS feed to podcast app, enable auto-download

Final Thoughts

Three years ago, building this would have taken me weeks. With Claude Code, it took 3 hours.

Getting into the motion of building like this has been immensely valuable for me. Instead of thinking of a useful tool and wishing I had the time and expertise to build it, I use these tools that can take my idea and build it for me.

What would you build if coding wasn’t the bottleneck? For me, it’s this 8-minute podcast with really boring thumbnail art that saves me about an hour every morning. That’s really beneficial on its own, but what’s even cooler is that every small project like this I work on spawns several more ideas. More on those later!